How Data Unlocks AI Success

Why Data Mastery Separates Winning Consumer AI Startups

As we started exploring the realities of consumer AI businesses, one insight became immediately clear: data is everything. Success isn’t just about the latest algorithms or the most impressive demos. The real differentiation happens behind the scenes, in how companies manage, structure, and protect their data. Before diving into the specifics, we wanted to lay out a simple, big-picture perspective on what really matters in the data-to-AI equation.

The Data Reality Check

Every boardroom has the same conversation these days. The slide deck includes ambitious AI initiatives, the projections show hockey-stick growth, and everyone nods about being "AI-first". But behind the glossy demos and pilot programs hides a far more mundane question that will determine success or failure: are your data systems really ready for it?

Most businesses we talk to have put together their data systems bit by bit over many years, adding new parts and tools whenever needed. It’s a bit like an old house that’s been through many owners and quick renovations. Things mostly work, but no one really knows exactly how it all fits together or which parts are really important. The basics might be okay, but if something goes wrong, it can be hard to figure out what’s causing the problem.

AI systems are like ultra-sensitive appliances. They demand fresh, consistent, trustworthy data delivered almost instantly. Feed them messy information or outdated records and the models can be corrupted, causing quality issues further down the line. Adding another new tool on top of everything else could easily lead to months of unexpected extra work just to fix the problems it causes. This problem existed long before AI, and AI has only made it more relevant.

Data Chaos in the AI Era

Over the past 18 months, we've watched high-profile incidents where legacy data problems torpedoed AI initiatives: outdated metrics, orders sent to the wrong places, privacy breaches resulting in fines, and promising real-time personalization initiatives shelved because of their low impact and high costs.

The pattern is often the same: legacy data systems collide with big dreams of AI and the gap is what causes the whole project to fail.

Before we go into the specifics, let’s agree on the main things any AI system needs when it comes to data:

Fast access to up-to-date information: A customer service copilot needs to surface the customer's purchase history right away so that user queries can be answered instantly. Waiting for last night's ETL (extract, transform, load) batch is just not an option.

Data from different places, all in one view: Today's average Series C company runs 40+ SaaS integrations. AI works best when it can connect the dots across all systems, not just in isolation.

Strong privacy and rules: Protecting people’s personal information and following regulations is more important than ever in the context of AI.

On this last point, GDPR fines across Europe reached €1.2 billion in 2024, a strong reminder that poor data management isn’t just a behind-the-scenes issue anymore; it’s something that can damage your company’s reputation and bottom line, especially in consumer businesses. To protect yourself, you need to obfuscate PII (personally identifiable information) before using it, track where your data comes from, and keep records of every AI decision, all without slowing down your business. This can be very difficult to retrofit if you already have large quantities of legacy data that has not been cleansed.
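To make "keep records of every AI decision" a little more concrete, here is a minimal sketch of what one audit entry could look like. Everything in it is an assumption for illustration: the `decision_record` function, the field names, and the idea of hashing the inputs rather than storing them are ours, not a regulatory template.

```python
import hashlib
import json
from datetime import datetime, timezone


def decision_record(model_version, source_tables, features, decision, now=None):
    """Build one audit entry for a single AI decision (hypothetical schema)."""
    now = now or datetime.now(timezone.utc)
    # Hash the inputs so the entry can be matched to a request later
    # without copying the inputs themselves into the log.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": now.isoformat(),
        "model_version": model_version,
        # Where the inputs came from: a very coarse form of lineage.
        "source_tables": sorted(source_tables),
        "input_hash": hashlib.sha256(payload).hexdigest(),
        "decision": decision,
    }
```

Writing entries like this to an append-only store is cheap at decision time, which is what keeps record-keeping from slowing the business down.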

From Patchwork Data to Solid Foundations

Instead of another tool or quick fix, real success comes from building strong data habits, what we call architectural discipline.

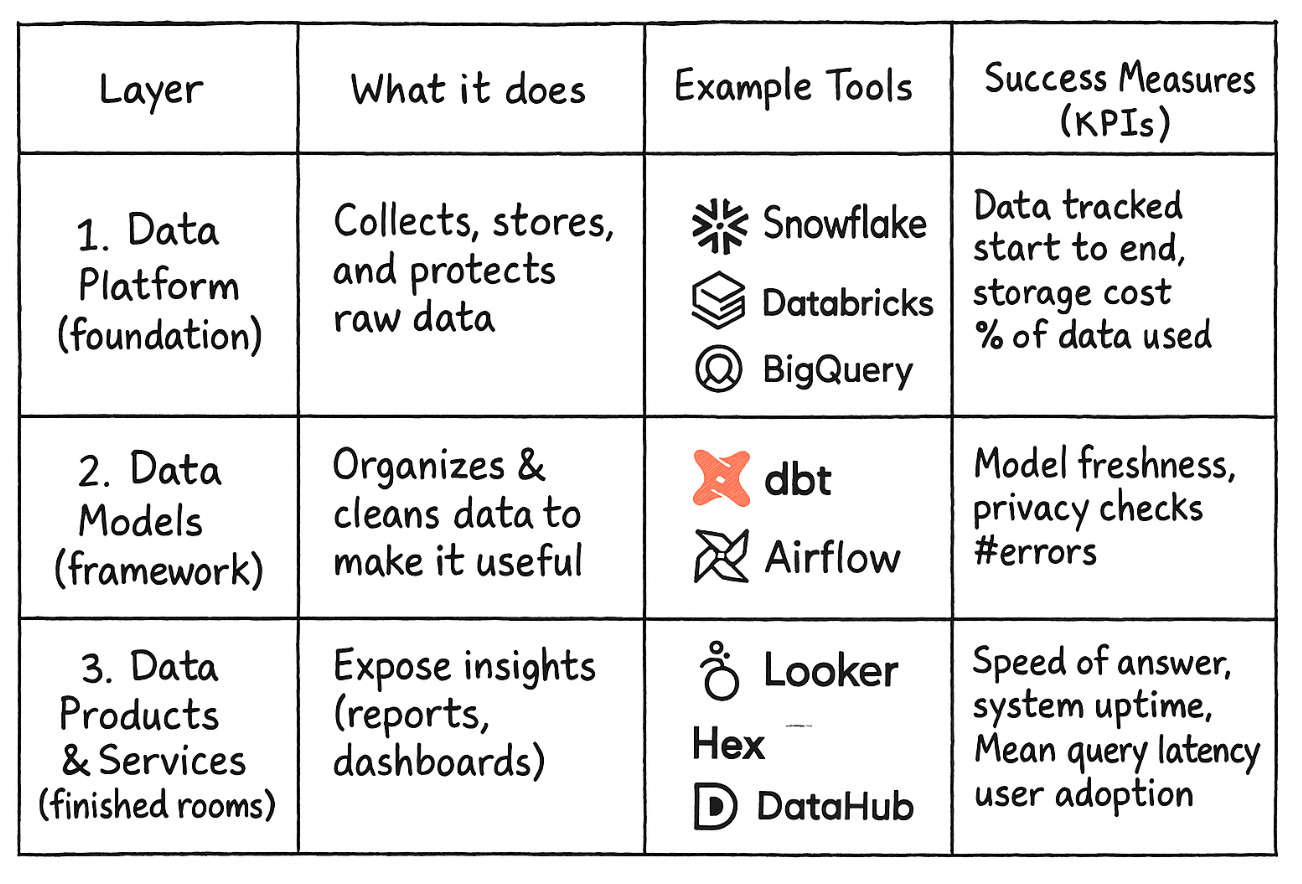

The best companies we work with use a clear, three-layer data model:

Solid base (foundation or Data Platform)

Sturdy structure (framework or Data Models)

Flexible spaces (finished rooms or Data Products and Services)

All three are connected by the right tools, just like a well-designed house. This makes everything work together smoothly and makes it easy for everyone to understand, not just the experts.

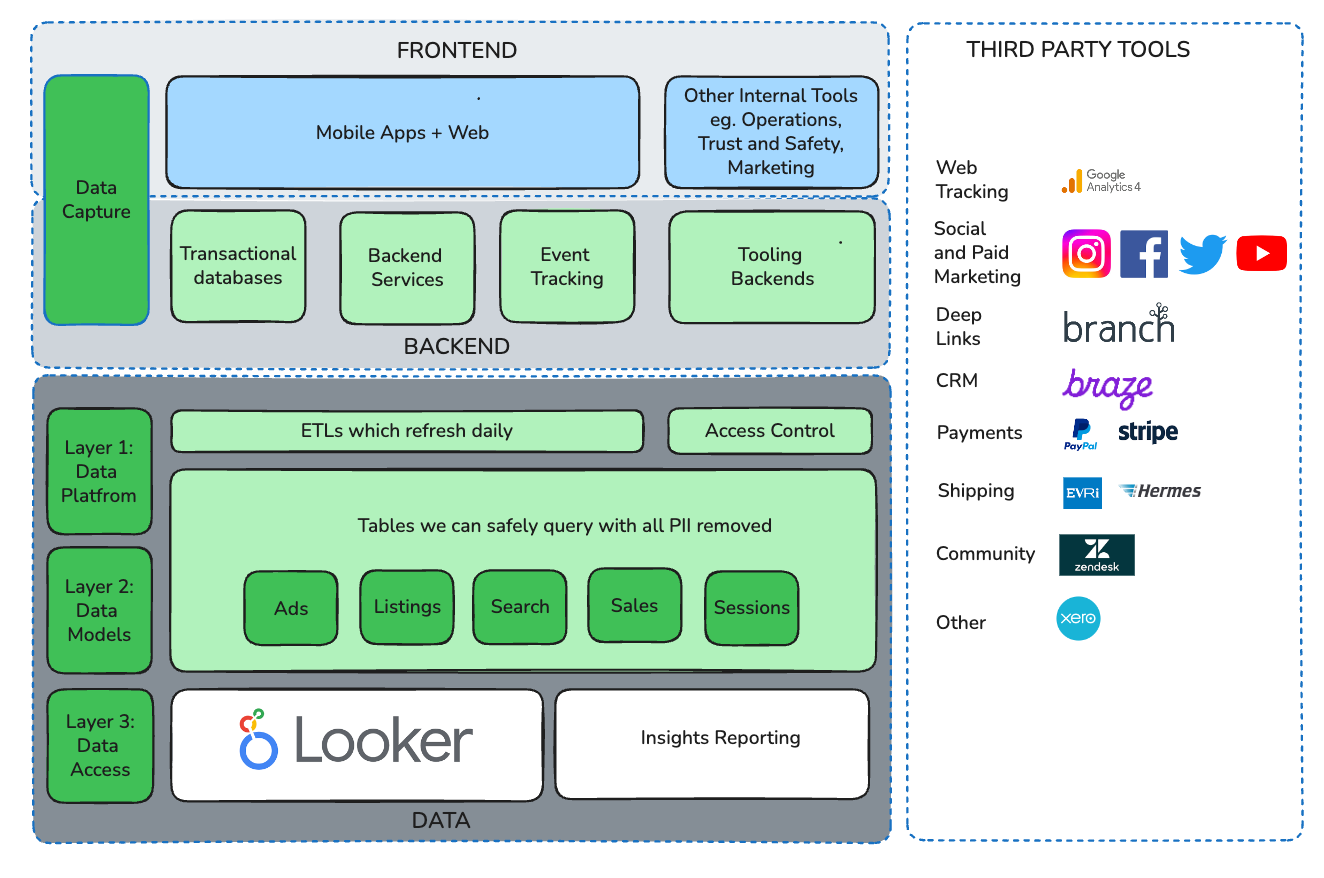

Image created with ChatGPT (DALL-E), so some logos may not be 100% accurate.

Let’s walk through why these layers exist and what happens in each of them:

Layer 1: The Data Platform

Layer 1 is your system of record for all raw data generated by the business. This includes website clicks, app usage, sales and device data, and very often unstructured data such as transcripts and raw files. It's where data is captured, stored, secured, and governed, typically built on cloud-based data warehouse platforms such as Snowflake, Databricks, or Google BigQuery.

The key questions at this layer are architectural:

Who has access to raw data and how is that access controlled?

What's your hot versus cold storage strategy? (Hot = fast access for current or key data; cold = slower access for rarely used data, kept for compliance, for instance.)

Can you trace data lineage end-to-end? (Lineage is the ability to track the entire journey of your data.)

Treat this layer like a bank vault: keep the number of people with access to a minimum, with as few keys as possible, comprehensive monitoring, and strict processes around data access.
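The hot versus cold question often comes down to a simple rule about how recently data was used. The sketch below assumes a 90-day window, which is a hypothetical threshold we picked for illustration; the right number depends on your access patterns and retention rules.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical threshold: data untouched for 90 days is treated as cold.
HOT_WINDOW = timedelta(days=90)


def storage_tier(last_accessed, now, hot_window=HOT_WINDOW):
    """Return "hot" for recently used data and "cold" otherwise."""
    return "hot" if now - last_accessed <= hot_window else "cold"


def plan_tiers(last_access_by_dataset, now, hot_window=HOT_WINDOW):
    """Map each dataset name to the tier it would land in."""
    return {
        name: storage_tier(last_accessed, now, hot_window)
        for name, last_accessed in last_access_by_dataset.items()
    }
```

In practice the warehouse or object store usually applies a rule like this for you through lifecycle policies; the point is that someone has to decide the threshold deliberately.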

Layer 2: Data Models

Layer 2 is where chaos becomes order. Raw data gets transformed into cleansed tables, business concepts and metrics. Tools like dbt, Airflow, and data observability platforms such as Soda or Elementary automate the transformations while checking for freshness and alerting when things break.
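To show what "checking for freshness" means in practice, here is a minimal sketch in plain Python. It is not the API of dbt, Airflow, Soda, or Elementary; the function names and the idea of passing in a dictionary of last-load timestamps are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at, max_age, now=None):
    """True if the table was loaded within the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at <= max_age


def stale_tables(last_loaded, max_age, now=None):
    """Return the names of tables whose last load is older than max_age."""
    now = now or datetime.now(timezone.utc)
    return sorted(
        name for name, loaded_at in last_loaded.items()
        if not is_fresh(loaded_at, max_age, now)
    )
```

An observability tool runs a check like this on a schedule and raises an alert when the returned list is not empty.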

The principle here is ruthless standardization: "active user" and "qualified lead" must mean the same thing across every system. Privacy-by-design happens here: PII is stripped or pseudonymized before the data is processed into tables that others in the business can use safely. This helps ensure adherence to data privacy regimes such as GDPR.
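One common way to pseudonymize PII is keyed hashing, sketched below with Python's standard `hmac` module. The field list, the function names, and the choice of HMAC-SHA256 are assumptions for illustration; your privacy team may require a different technique.

```python
import hashlib
import hmac

# Hypothetical list of columns treated as PII.
PII_FIELDS = frozenset({"email", "phone"})


def pseudonymize(value, secret_key):
    """Replace a value with a stable keyed hash (HMAC-SHA256, hex encoded)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record, secret_key, pii_fields=PII_FIELDS):
    """Return a copy of the record with PII fields pseudonymized."""
    return {
        key: pseudonymize(str(value), secret_key)
        if key in pii_fields and value is not None
        else value
        for key, value in record.items()
    }
```

Because the same input always produces the same token, tables can still be joined on the pseudonymized column. The secret key has to be stored separately from the data, otherwise the protection is weak.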

Layer 3: Data Products and Services

This is where our cleansed data from Layer 2 becomes actionable in the form of dashboards for executives, real-time APIs powering personalization, fraud detection models, and even datasets monetized as products. Speed, reliability, and discoverability matter more than raw computational power.

Critical requirements include versioned APIs with contracts so downstream systems don't break, comprehensive cataloging using tools such as DataHub so teams can discover existing assets before building new ones, and SLAs that match business needs rather than technical conveniences.
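A "contract" can be as simple as a named, versioned list of fields and types that every payload is checked against. The sketch below is a hypothetical, hand-rolled version of that idea; the `Contract` class and `violations` function are ours, and real teams often use a schema registry or a validation library instead.

```python
from dataclasses import dataclass


@dataclass
class Contract:
    """A versioned description of what a data product promises to return."""
    name: str
    version: str
    fields: dict  # field name -> expected Python type


def violations(contract, payload):
    """List every way the payload breaks the contract (empty list = valid)."""
    problems = []
    for field_name, expected_type in contract.fields.items():
        if field_name not in payload:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(payload[field_name], expected_type):
            problems.append(
                f"wrong type for {field_name}: expected {expected_type.__name__}"
            )
    return problems
```

Changing a field's type or removing it would then mean publishing a new contract version rather than silently breaking downstream consumers.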

The diagram below illustrates some of the key elements within each of the three layers of the stack:

Closing the Gap between Batch and Real Time Data

Most companies struggle here: traditional data systems were built to process information in batches for next-day reports, not to respond instantly to customer actions. For example, marketing teams need real-time alerts when someone abandons their cart or a VIP user logs in, but old systems can’t keep up. That’s why businesses often spend extra on workarounds.

One solution is to introduce an Operational Data Hub (ODH). This is like a fast, smart “middle layer” that helps connect slow, old data systems with new, real-time business needs. It quickly pulls in fresh data and sends it where it’s needed right away.
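At its core, a hub like this routes each incoming event to whoever has asked for that kind of event. The in-memory sketch below only illustrates that routing idea; the class, its methods, and the `cart_abandoned` event type are hypothetical, and a production ODH would typically sit on a streaming platform rather than a Python dictionary.

```python
from collections import defaultdict


class OperationalDataHub:
    """Toy hub: routes events to the handlers subscribed to their type."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        """Register a callable to receive every event of this type."""
        self._subscribers[event_type].append(handler)

    def publish(self, event):
        """Deliver the event immediately; return how many handlers got it."""
        handlers = self._subscribers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


# Usage: the marketing system asks for cart-abandonment events.
hub = OperationalDataHub()
marketing_alerts = []
hub.subscribe("cart_abandoned", marketing_alerts.append)
hub.publish({"type": "cart_abandoned", "user_id": "u42"})
```

The slow batch systems stay untouched; they simply become one more subscriber, while real-time consumers receive events as they happen.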

The good news is that modern cloud tools for real-time data are now much cheaper, in some cases 60-80% less than they were just two years ago. But you’ll need tech talent who know how to set up and run these updated systems.

Why AI Makes This Urgent

Generative AI doesn't just consume more data; it fundamentally changes what data architecture must deliver. Three shifts move a data architecture upgrade from "nice to have" to "business critical":

Real-time becomes table stakes: Large language models personalize better when fed with up-to-the-minute signals. The user's last click, the machine's current sensor reading, the fraudster's latest pattern: these signals decay in minutes, not months.

Integration explosion meets vendor consolidation: AI is being used in every part of business, so companies need to connect more systems than ever. At the same time, big tech vendors are buying up smaller tools, so there are fewer but more powerful platforms. Companies must design their systems to avoid relying too much on just one vendor and to prevent having too many disconnected tools.

Governance moves upstream: When AI models leak training data or exhibit bias, the liability flows back to data controllers under GDPR. Privacy, security, and ethical considerations can't be bolted on after deployment; they must be baked into the data pipeline from day one.

From Blueprint to Reality

Building an AI-ready data fabric isn't a technology project. It's an enterprise transformation program that requires board-level commitment and cross-functional execution.

Name a single owner: Designate a senior executive (Chief Data Officer, CTO, or Chief Product Officer) who's accountable for both the data strategy and end-state architecture, not just tool procurement. Give them a formal charter and budget authority.

Think product, not project: Treat your data fabric as an internal product with roadmapped releases, user research with data consumers, and published Service Level Agreements (SLAs). This shifts the narrative from "migration cost" to "platform capability."

Govern by metrics: Track data downtime minutes, model freshness, and AI error budgets in time series alongside traditional business KPIs. Make data quality as visible as sales performance in quarterly board reviews.

Diagnose: Commission an external data architecture audit covering lineage coverage, privacy posture, and real-time readiness. Benchmark your data spend against industry peers, separating storage, compute, and SaaS subscription costs.

Decide: Approve a reference architecture based on the three-layer model. Set concrete SLAs for hot-path data; a sub-5-second event-to-dashboard latency is not unreasonable. Appoint a data governance officer who reports quarterly to your audit committee.

Deliver: Start by building a high-impact project (a “lighthouse use case”) that shows off the new data system in action. Good examples are real-time marketing alerts (like messaging a customer as soon as they abandon their cart) or smart fraud detection powered by AI.
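Two of the numbers mentioned in the steps above, data downtime minutes and the sub-5-second latency target, are easy to compute once incidents and latencies are logged. The sketch below is one possible way to do it; the function names and the choice of the 95th percentile (nearest-rank method) as the SLA statistic are our assumptions, not a standard.

```python
from datetime import datetime


def downtime_minutes(incidents):
    """Sum the minutes across (start, end) data incident windows."""
    return sum((end - start).total_seconds() for start, end in incidents) / 60


def p95(values):
    """95th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("p95 needs at least one value")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def meets_latency_sla(latencies_seconds, target_seconds=5.0):
    """True if 95% of events reached the dashboard within the target."""
    return p95(latencies_seconds) <= target_seconds
```

Tracked over time, both figures can sit next to sales and revenue in the same board review.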

The Opportunity Cost

If you put off change, you’ll get left behind and forced to catch up later. Companies that are slow to adopt new technology (like mobile in 2010 or cloud in 2015) end up spending more on quick fixes and don’t get as much real value.

Real-time AI raises the stakes even higher. Your most valuable business signals (customer intent, system anomalies, market shifts) decay in minutes, not months. Meanwhile, regulatory pressure keeps intensifying as authorities worldwide scrutinize AI data practices.

The companies that treat AI-ready data architecture as tomorrow's problem will spend tomorrow firefighting outages, regulatory fines, and competitive disadvantages. The companies that act now will unlock faster product cycles, richer customer experiences, and defensible competitive moats.

Your Data Strategy Is Your AI Strategy

Cash might be the water that keeps companies alive, but data is the blood circulating intelligence and context to every business function. Most organizations are operating with clogged arteries: legacy architectures that worked for yesterday's batch analytics but buckle under AI's real-time demands.

The choice is binary: develop a deliberate data strategy built around an AI-ready data fabric, or accept that your AI initiatives will remain expensive experiments rather than business transformations.

Your data infrastructure isn't just information plumbing. It's the load-bearing foundation of every AI ambition to come. Build it like your competitive future depends on it, because it does.

The time for planning is over. Why not kick off that data architecture audit in the next 30 days?

We’d love to hear your thoughts, stories, or discoveries from the front lines of consumer AI. Just write a comment and/or share your experiences below.

Stay tuned for more insights coming soon.

And in the meantime, enjoy the summer ☀️🕶️ 🍉!

Tools used this week

Perplexity Comet - we are loving it, Assistant the hell out of it!
