Google Strikes Back

Gemini 3 resets the competitive map and puts real pressure back on OpenAI.

Why Gemini 3 matters now

One of the main themes for 2026 will clearly be the intensifying Gen AI competitive landscape. Gemini 3, released in November 2025, feels like a real turning point. For three years, OpenAI defined both the pace and the public narrative. Gemini 3 signals that the market is contested again, and that the winners may be decided by full-stack execution, not just model quality.

This post unpacks why Gemini 3 matters, how Google arrived at this moment, and what it signals for the broader AI ecosystem. Specifically, we will consider:

Google’s ramp-up: the strategic, organisational, and technical moves that led up to this point

Google’s current position and how its full AI stack compares to OpenAI’s

The implications for industry dynamics and end users

We also consider how OpenAI may respond. Soon after Gemini 3 launched, reporting suggested OpenAI entered an internal “Code Red”: an all-hands push with resources redirected toward improving areas where Google was advancing quickly, especially image generation.

Source: DALL-E image generated (not Nano Banana)

For today’s post, we collaborated with Ryan Popa, AI architect and former CTO at Karhoo. Ryan has a particular interest in how AI is changing commerce, software development, and the future. He likes to dig into technical details and understand AI at the most fundamental level. He also has extensive experience working with Google Cloud, data, and AI technologies.

How Google got back in the game

Google’s founding mission “to organise the world’s information and make it universally accessible and useful” has always implied an ambition beyond search. Over two decades, it enabled Google to attract exceptional talent and sustain long term research cycles at a scale few can match.

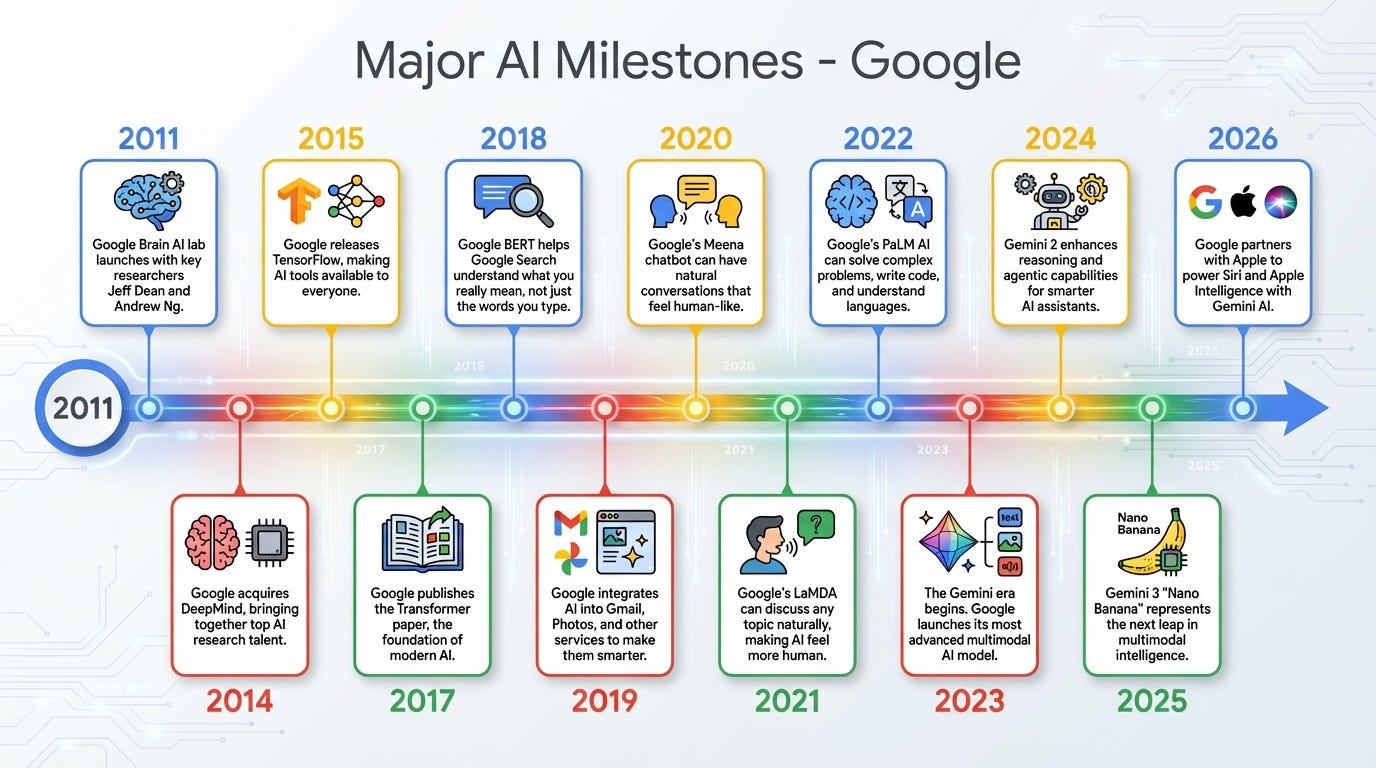

As discussed in our last CxAI post, Google’s search dominance generated entire industries. In parallel, Google was investing quietly but deeply in AI foundations. The milestones shown in the accompanying image illustrate this trajectory and provide essential context for why Gemini’s moment matters now.

Source: CxAI (Image created with Nano Banana)

A key inflection point came in 2011 with the creation of Google Brain by Andrew Ng, Jeff Dean, and Greg Corrado. Incubated within Google X (the company’s moonshot lab), the initiative aimed to push neural networks out of academia and into large scale production systems. That internal bet was reinforced in 2014 with the acquisition of UK based DeepMind, which brought world leading reinforcement learning talent and a long term AGI vision into Google.

Google also invested heavily in the wider AI ecosystem by open-sourcing TensorFlow in 2015, helping democratise machine learning and accelerate deployment across products like Translate and Photos. Less visible but strategically crucial was its parallel push into custom hardware. Tensor Processing Units (TPUs) tightly integrated software and infrastructure, creating a vertically aligned AI stack that is now emerging as a major competitive advantage.

The most consequential breakthrough arrived in 2017 with the publication of “Attention Is All You Need” by researchers from Google Brain and Google Research. The Transformer architecture it introduced underpins virtually all modern LLMs, including OpenAI’s GPT series.

Despite leading the original breakthrough, Google was slow to turn it into products. Several key researchers left to build startups such as Character.ai and Cohere, while internal caution and organisational fragmentation kept research and product teams loosely connected. This created an opening that OpenAI exploited between 2019 and 2023 with a product-first approach, culminating in ChatGPT becoming the public’s main entry point to generative AI.

As Parmy Olson notes in her book, Supremacy, this divergence reflected a philosophical split. Demis Hassabis (DeepMind co-founder) viewed AGI as a scientific unlock, while Sam Altman framed it as a route to economic abundance. That difference shaped strategy and timing, defining the competitive landscape for several years.

Google’s decisive re-entry came in 2023 with the merger of Google Brain and DeepMind into a single organisation, Google DeepMind. The move was not just structural; it unified talent, aligned compute and infrastructure, and integrated model development, hardware design, and deployment into a single full-stack system. Under Hassabis, DeepMind set technical direction while the former Brain group contributed deep infrastructure and TPU expertise.

This merger enabled Google to focus on a single foundational model family, Gemini. Built natively on TPUs and designed for multimodality and reasoning, Gemini was conceived as a platform layer for Search, Cloud, Gmail, and beyond. While early releases were deliberately conservative, Gemini 2.5 earned a reputation among practitioners for coherence, reasoning depth, and consistency in real-world use.

Gemini 3 vs. OpenAI: the stack battle

Google’s position at the start of 2026 looks markedly different from even a year ago. What was once widely characterised as a conservative posture has given way to renewed confidence in Google’s technical leadership.

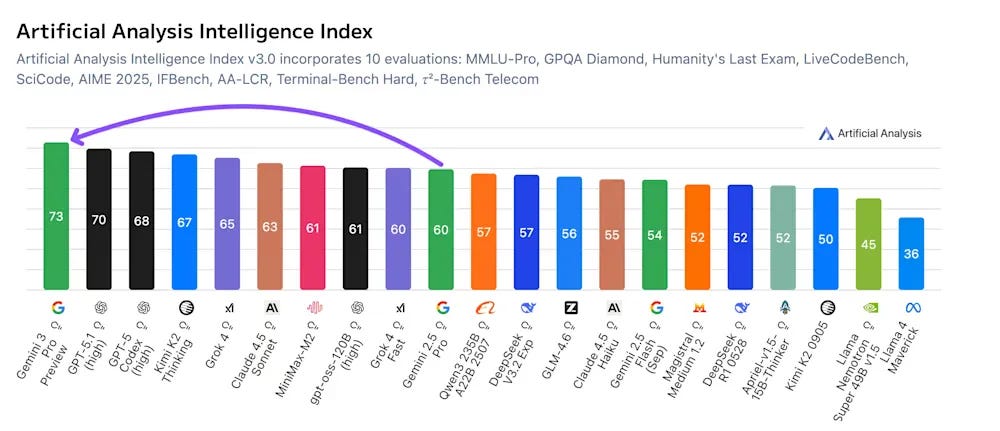

Gemini 3, launched in November 2025, represents a substantial advance in model capability. Independent benchmarks indicate that Gemini 3 outperforms GPT-5.1 across several dimensions. Gemini 2.5 had already established itself as a fast, reliable, and widely adopted model, though it was still often perceived as trailing the GPT-5 family at the frontier. With Gemini 3, that perception has materially changed. In some areas, the gap has closed; in others, it has reversed.

Former Google Chief Decision Scientist Cassie Kozyrkov suggested that “for the first time in years, Google is not chasing the frontier... it *is* the frontier.”

Even Marc Benioff, CEO of Salesforce, is sold:

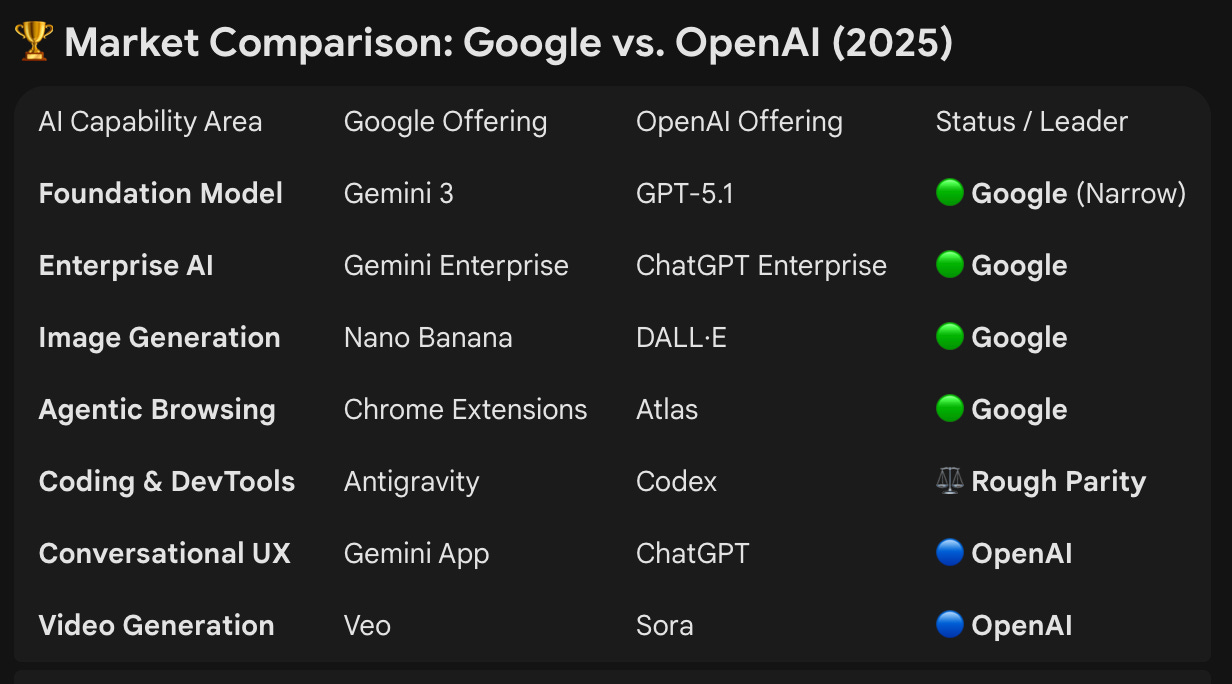

This reassessment is not limited to benchmark enthusiasts. Enterprise practitioners and platform builders who have evaluated Gemini 3 in production environments report improvements that matter operationally rather than cosmetically. The table below provides a non-scientific comparison of Google and OpenAI across key AI product categories, based on technical capability and early practitioner feedback:

Source: CxAI using ChatGPT

Google has carried the momentum into the first two weeks of 2026. It recently announced a new protocol called UCP to support agentic shopping, which will be integrated into “Gemini apps to let shoppers check out directly from U.S.-based retailers while researching a product”. In the last few days, it also announced a partnership with Apple on AI:

“Google’s Gemini will power the company’s Apple Intelligence features, including the delayed overhaul of Apple’s lagging Siri voice assistant.”

Four dimensions that explain the shift

We believe four main dimensions have allowed Gemini 3 to outpace the competition:

Multimodality: one model that handles text, images, audio, video, and structured data

Staying power: better intent tracking, tool use, and long workflow consistency

Long context: can ingest and use very large documents and transcripts in one pass

Developer strength: strong code generation, debugging, and refactoring help

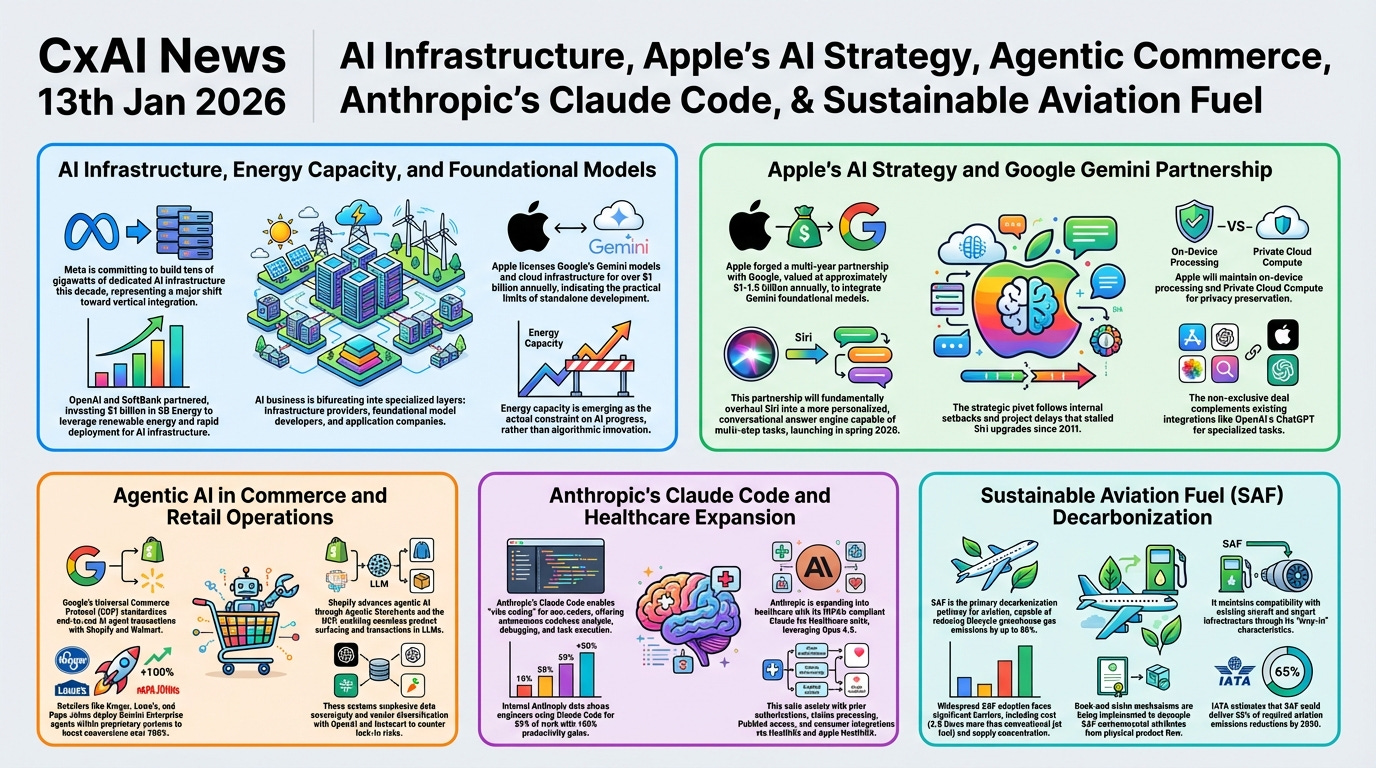

We have been using Gemini 3 directly, along with several applications built on top of it, including Nano Banana, and the results are consistently strong. A good example is the infographic we generate daily for the CxAI news report. It is generated in just over 30 seconds using Nano Banana with a complex 16k-character prompt that includes the report content plus strict formatting instructions.

Here is one generated from yesterday’s CxAI report using the gemini-3-pro-image-preview model, invoked via Google’s GenAI API:

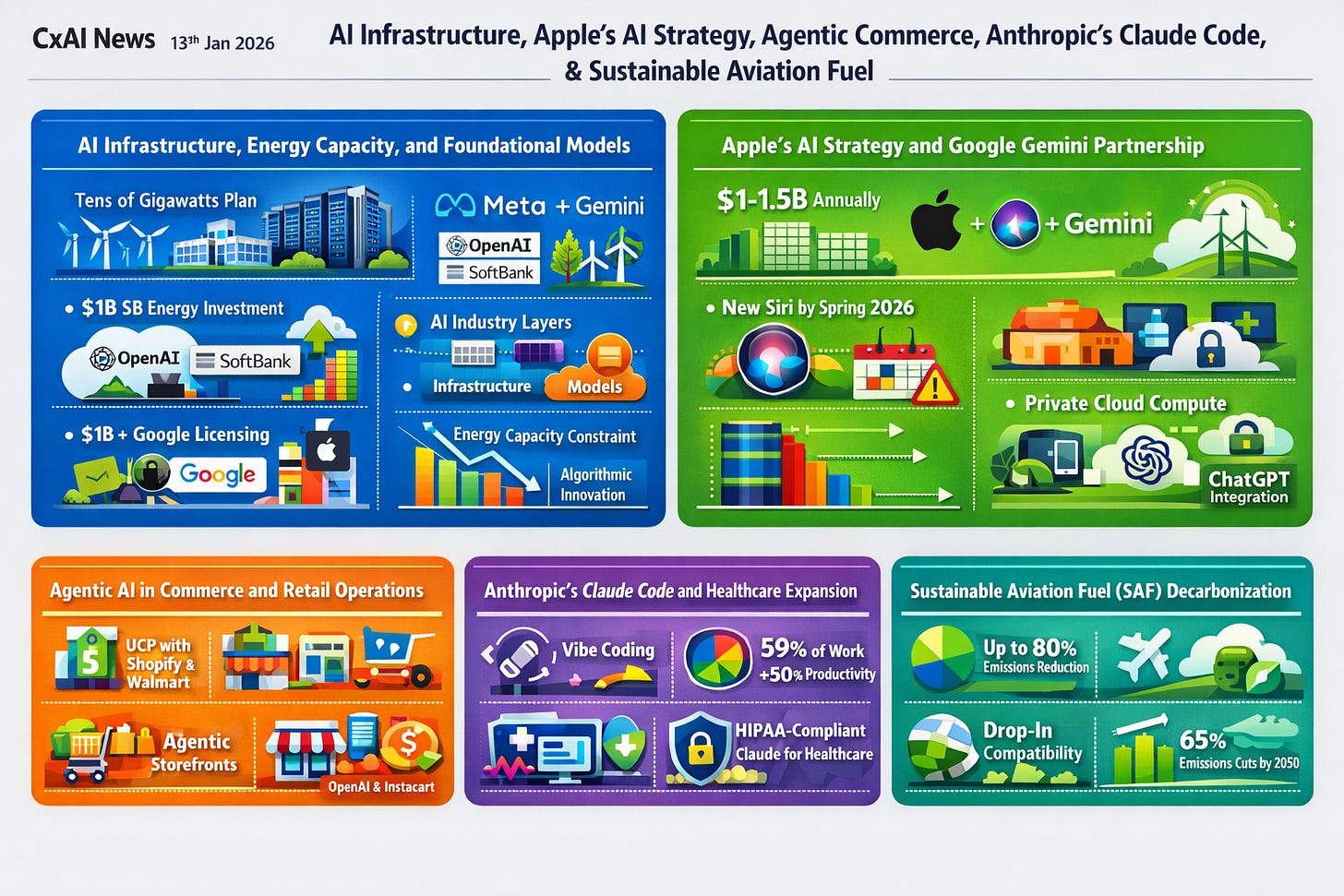

Here is the image generated from the exact same prompt in around 60 seconds using OpenAI’s new gpt-image-1.5 model via their API, which requires organisation verification first. Both were run with the same prompt and similar layout constraints, but image models can vary meaningfully from run to run.

In our tests, the Google output has been more reliable at preserving dense text and layout fidelity, which matters for workflow automation rather than aesthetic demos.
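Because single runs can mislead, a comparison like the one above is easier to trust when each model is timed over several runs. The following is a minimal sketch, assuming each provider is wrapped in a callable that takes a prompt and returns image bytes. The two `fake_generator_*` functions are hypothetical stand-ins that only sleep; they are not real API calls and their timings say nothing about either vendor.

```python
import statistics
import time
from typing import Callable, Dict, List


def time_generator(
    generate: Callable[[str], bytes], prompt: str, runs: int = 3
) -> Dict[str, float]:
    """Call `generate` several times and summarise wall-clock latency in seconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    durations: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        image = generate(prompt)
        durations.append(time.perf_counter() - start)
        # An empty result counts as a failed run rather than a fast one.
        if not image:
            raise RuntimeError("generator returned no image data")
    return {
        "runs": float(runs),
        "median_s": statistics.median(durations),
        "max_s": max(durations),
    }


# Hypothetical stand-ins: replace with wrappers around real image APIs.
def fake_generator_a(prompt: str) -> bytes:
    time.sleep(0.01)
    return b"image-a"


def fake_generator_b(prompt: str) -> bytes:
    time.sleep(0.02)
    return b"image-b"


if __name__ == "__main__":
    prompt = "Daily report infographic with strict formatting instructions"
    for name, generator in {"A": fake_generator_a, "B": fake_generator_b}.items():
        print(name, time_generator(generator, prompt))
```

Reporting the median alongside the slowest run keeps one unusually slow request from dominating the picture, which matters when the output feeds an automated daily workflow.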

Where Google still stumbles

Despite its technical progress, Google still faces meaningful challenges at the product and ecosystem level, and these could determine whether it wins mindshare, not just benchmarks.

A consumer habit gap

ChatGPT continues to command significantly higher daily usage and mindshare than the Gemini app. Some estimates suggest Gemini’s DAU-to-MAU ratio (daily active users as a share of monthly active users) sits in the high single digits, indicating weaker habit formation than ChatGPT. Gemini has enormous reach, but it has not yet become the default daily assistant for most users.
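For readers unfamiliar with the metric, the DAU to MAU ratio is simply daily active users divided by monthly active users, usually quoted as a percentage. A minimal sketch of the arithmetic follows; the function name is our own and the input figures are illustrative placeholders, not real usage data for Gemini, ChatGPT, or any other product.

```python
def stickiness_percent(daily_active_users: int, monthly_active_users: int) -> float:
    """Return daily active users as a percentage of monthly active users."""
    if monthly_active_users <= 0:
        raise ValueError("monthly_active_users must be positive")
    if daily_active_users < 0:
        raise ValueError("daily_active_users must not be negative")
    return 100.0 * daily_active_users / monthly_active_users


# Placeholder inputs chosen only to show a "high single digits" result.
example = stickiness_percent(8_000, 100_000)
print(f"{example:.1f}%")  # prints 8.0%
```

A ratio in this range means that, on a typical day, fewer than one in ten of the month's users show up, which is why it is read as a sign of weak habit formation.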

A fragmented product story

Gemini lives at gemini.google.com/app, and Google’s AI experience remains fragmented across multiple surfaces:

• The Gemini chat interface

• Traditional Google Search

• Google Search AI Mode

These can produce different answers to the same query, with different formats and levels of depth. Ask “how many users does Gemini have daily” across these surfaces and the inconsistency becomes immediately apparent. That is not a model problem; it is a product coherence problem.

This fragmentation also creates a packaging challenge. Many users still do not know which Gemini they are using, what model powers which surface, and which features are gated behind which subscription tier. Packaging matters because it shapes trust, expectations, and repeat usage.

Usage is hard to measure

Usage data is also difficult to interpret. Gemini is embedded across Chrome, Workspace, Android, and Search, so many users may benefit from Gemini’s reasoning multiple times per day without consciously “using Gemini.” While this integration is strategically powerful, it makes adoption harder to measure and the consumer story less clean than ChatGPT’s.

Trust and reliability debates

There are also credible technical critiques. Perplexity and other evaluators have flagged higher hallucination rates, weaker instruction following, and a less mature developer ecosystem compared with GPT-5.2. Some cited figures suggest hallucination rates above 70% in certain tests, dramatically higher than those reported for GPT-4o.

Developer tooling momentum

Coding is another area where Google appears to be losing momentum with developers. Claude, particularly with Claude Code and Opus 4.5, has rapidly become a preferred assistant for many. This matters disproportionately because code is both a high value use case and a critical source of training data, so momentum compounds quickly.

Despite the above, Similarweb data suggests Gemini has grown to about 21 percent of web traffic to AI chat products by January 2026, versus about 65 percent for ChatGPT. That shift reflects distribution and visibility more than a sudden reversal in model quality, but it is still a meaningful gain.

OpenAI’s response

OpenAI responded quickly, shipping three visible updates in December 2025:

GPT-5.2 released December 11, 2025: Positioned as a step up in day-to-day reliability plus stronger reasoning and coding

GPT Image 1.5 released December 16, 2025: Rolled out in ChatGPT and the API, clearly aligned with the “close the gap on images” priority

GPT-5.2 Codex released December 18, 2025: A push on agentic coding workflows, long-horizon changes, and professional engineering use

Some coverage suggested OpenAI deprioritised advertising initiatives during the Code Red focus, reinforcing that the immediate goal was product quality and retention, not new monetisation experiments.

What happens next: monetisation moves into answers

Google looks unusually well positioned for the next phase of the AI market, not just because Gemini is strong, but because Google can turn capability into distribution and revenue at scale.

Four structural advantages we observe:

Financial strength: Large cash reserves allow Google to self fund AI investment, absorb volatility, and keep playing a long game

Full stack control: Own silicon (TPUs) plus infrastructure means lower unit costs, tighter optimisation, and less dependence on external GPU supply

Built-in distribution: Search, Gmail, Chrome, Android, and Workspace give Google instant deployment surfaces to billions of users, often without asking them to change tools

Unique data and intent signals: Search and commerce intent, plus productivity context, create an advantage for personalisation and monetisation that most competitors cannot match

Taken together, these factors underpin Google’s position as the most vertically integrated AI company operating at global scale.

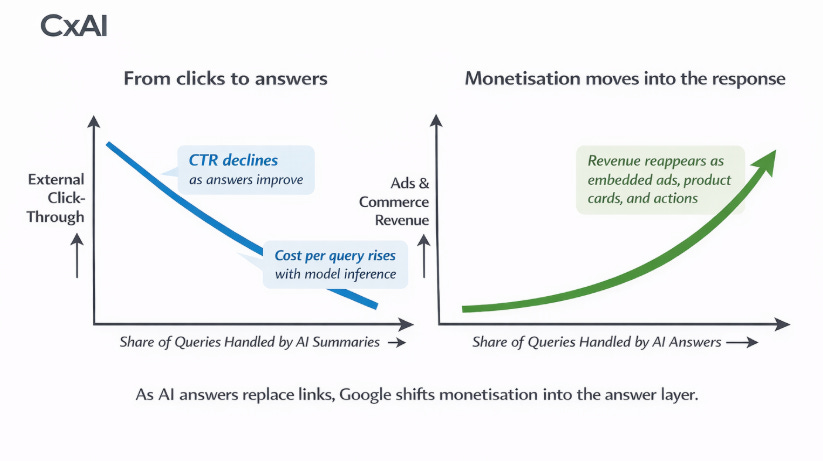

The innovator’s dilemma becomes the strategy

Google’s AI Mode reduces traditional search click-through and pushes cost per query up, which pressures the legacy ads engine. The likely resolution is monetisation inside the answer itself: more ads, more product placement, and more transactional flows embedded directly in Gemini and AI Mode. This is less a capability gap than a product and monetisation transition, and that transition may become Google’s biggest advantage.

For OpenAI, this presents a structurally asymmetric challenge. The company retains exceptional model talent and strong consumer mindshare, but operates without comparable control over infrastructure or distribution. Its valuation and strategic flexibility are shaped by high expectations for future revenue growth, targeting $100bn by 2030, which may limit optionality if competitive pressure intensifies.

A key strategic question is how competitors respond beyond incremental model improvements. OpenAI has signalled that enterprise offerings will be a top priority, but competing against Google’s integrated data, productivity, and cloud stack will be challenging. Hardware represents a potential wildcard. A rumoured OpenAI device, reportedly developed with Jony Ive, could open a new front in consumer AI, though success would depend heavily on execution, ecosystem fit, and sustained model advantage. Long-term memory, used to create the true personal assistant we all need in our lives, is another angle players may explore.

The question that really matters

The market is no longer about who has the best model this month. It is about who can build the most durable system around it.

Who becomes the default interface for daily work, search, and shopping?

Who can monetise answers without breaking trust?

Who can compound advantage through distribution, tooling, and ecosystem lock in?

If Gemini becomes the place where intent turns into action and transaction, Google does not just “catch up” to OpenAI; it changes where the profit pool sits.